MCP Servers in Production: Start Narrow, Stay Auditable

MCP is useful because it names the boundary that most agent systems eventually need anyway: a server that provides resources, prompts, and tools, and a host that decides what to expose and when.[1] The part people miss is that the protocol also spells out the trust problem. Hosts must get explicit user consent before exposing data or invoking tools, and implementors are told to build real authorization and consent flows because the protocol itself cannot enforce them.[1]

That is why “connect everything” is the wrong mental model. MCP is not a universal adapter. It is a trust boundary with a transport.

Where MCP pays off

The best MCP servers usually solve one of three problems:

- expose a narrow, typed slice of internal data

- wrap a tool behind a contract that the agent can call safely

- make an integration auditable enough that humans can review it later

That is enough. You do not need every system behind one server.

The trap is that MCP makes it easy to connect more systems than you can safely scope. Once that happens, you are no longer designing an assistant. You are designing a mixed-trust data plane.

The failure modes are not abstract

The worst production issues I see are predictable:

| Failure mode | What it looks like | Why it matters |
|---|---|---|
| Over-broad resources | A search endpoint returns docs, tickets, and secrets in one blob | The model sees more than the user should |
| Over-broad tools | One tool can read, write, and mutate because “the model can figure it out” | You create excessive agency |
| No provenance | A response is useful, but nobody can tell what source or filter produced it | You cannot audit or reproduce the answer |
| Prompt-only policy | The prompt says “respect permissions,” but the server still returns restricted data | The policy failed before the model saw the data |

OWASP’s LLM Top 10 maps directly onto these mistakes: prompt injection, insecure output handling, and excessive agency all show up fast once a server can fetch untrusted content and trigger actions from it.[2][3][4]

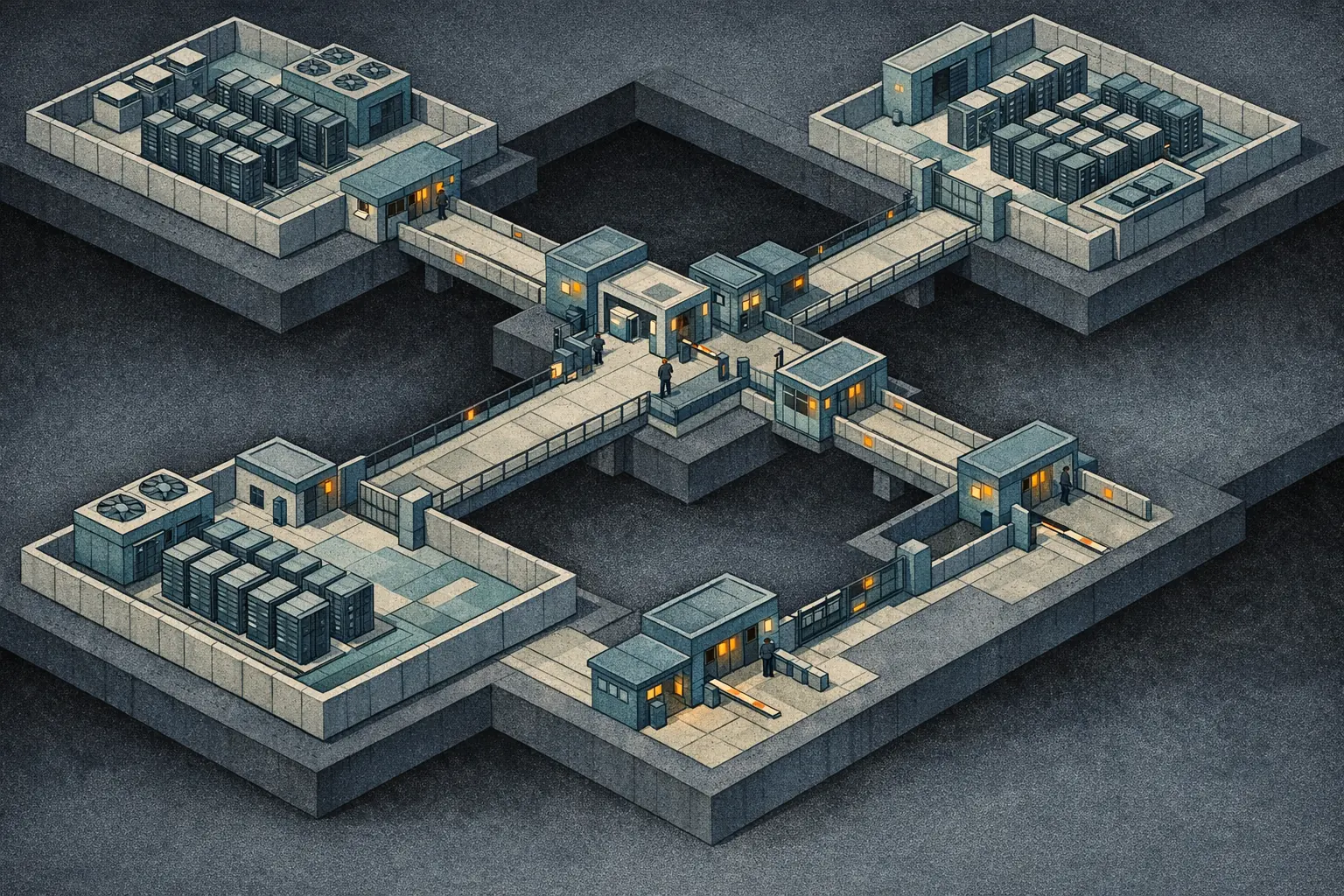

Scope the server to a trust domain

The highest-leverage design choice is boring: split servers by trust domain, not by API surface area.

Good server boundaries often look like this:

- `docs.read` for one workspace or product line

- `tickets.readwrite` for one support system

- `deployments.read` for one operational source of truth

- `admin` separate from user-facing tools

That approach keeps policy local. If a tool can write state, that tool should not live next to a read-only search endpoint unless both share the same trust model and the same audit needs.
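One way to keep that rule enforceable is to describe each server declaratively and check the description before deploying. The sketch below assumes such a declaration exists; `ToolSpec`, `ServerSpec`, `validate_server`, and the domain and audit labels are hypothetical, not part of any MCP SDK. It flags tools that fall outside the server's trust domain, and write tools that share a server with tools carrying different audit requirements.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolSpec:
    name: str
    trust_domain: str  # hypothetical label, e.g. "support-tickets"
    writes: bool       # True if the tool can mutate state
    audit_level: str   # hypothetical label, e.g. "standard" or "strict"


@dataclass
class ServerSpec:
    name: str
    trust_domain: str
    tools: list[ToolSpec] = field(default_factory=list)


def validate_server(server: ServerSpec) -> list[str]:
    """Return boundary violations; an empty list means the server passes."""
    problems = []
    for tool in server.tools:
        if tool.trust_domain != server.trust_domain:
            problems.append(
                f"{tool.name}: domain {tool.trust_domain!r} is not {server.trust_domain!r}"
            )
    # Write tools may share a server with read tools only when every tool
    # carries the same audit requirements.
    if any(t.writes for t in server.tools):
        levels = {t.audit_level for t in server.tools}
        if len(levels) > 1:
            problems.append(f"{server.name}: write tools mixed with audit levels {sorted(levels)}")
    return problems


docs = ServerSpec("docs.read", "docs-workspace", [
    ToolSpec("search_docs", "docs-workspace", writes=False, audit_level="standard"),
])
junk_drawer = ServerSpec("everything", "docs-workspace", [
    ToolSpec("search_docs", "docs-workspace", writes=False, audit_level="standard"),
    ToolSpec("close_tickets", "support-tickets", writes=True, audit_level="strict"),
])
print(validate_server(docs))         # []
print(validate_server(junk_drawer))  # two violations: foreign domain, mixed audit levels
```

A check like this can run wherever server definitions are reviewed, so a boundary violation is caught before it ships rather than after.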

The MCP spec supports doing this the right way. Tools are “functions for the AI model to execute,” resources are “context and data,” and the protocol includes explicit logging and cancellation primitives.[1] The spec does not say “make one giant server.” It gives you the pieces to keep boundaries explicit.
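To make those primitives concrete, here is a sketch of three JSON-RPC 2.0 messages as I read the spec: a `tools/call` request, a `notifications/message` log entry, and a `notifications/cancelled` notification. The builder functions, tool name, arguments, and log payload are hypothetical; check the spec revision you target before relying on exact field names.

```python
import json
from itertools import count

_next_id = count(1)


def request(method: str, params: dict) -> dict:
    """JSON-RPC 2.0 request: carries an id, so the peer must answer it."""
    return {"jsonrpc": "2.0", "id": next(_next_id), "method": method, "params": params}


def notification(method: str, params: dict) -> dict:
    """JSON-RPC 2.0 notification: no id, no response expected."""
    return {"jsonrpc": "2.0", "method": method, "params": params}


# Invoke a tool; the tool name and arguments are hypothetical.
call = request("tools/call", {"name": "search_docs",
                              "arguments": {"query": "deploy", "limit": 5}})

# Server-to-client log entry; levels use syslog-style names such as "warning".
log_entry = notification("notifications/message", {
    "level": "warning", "logger": "docs", "data": {"event": "index_lag", "seconds": 120}})

# Cancel the in-flight tool call by referring to its request id.
cancel = notification("notifications/cancelled", {"requestId": call["id"],
                                                  "reason": "user aborted"})

for message in (call, log_entry, cancel):
    print(json.dumps(message))
```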

Resources deserve tighter design than most teams give them

Resource design is where accidental overexposure usually starts.

If a resource says “return everything related,” you have already lost the argument. Good resource APIs should be:

- bounded

- filterable

- tenant-aware

- versioned

- provenance-rich

That means every returned chunk should answer:

- where did this come from?

- which tenant or user scope applied?

- how fresh is it?

- what was excluded?

That information is not overhead. It is the only reason an agent answer can be audited after the fact.
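Here is a minimal sketch of a resource that answers those four questions, assuming an in-memory store and made-up classification labels. `Document`, `Chunk`, `ResourceResult`, `search_docs`, and the constants are hypothetical; a real server would query an index and wrap the result in MCP resource contents. Clamping, tenant scoping, and classification filtering all happen before anything is returned, so the policy never depends on the prompt.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

MAX_LIMIT = 20                    # hard server-side cap, whatever the caller asks for
ALLOWED = {"public", "internal"}  # hypothetical labels; "secret" never leaves the server
SCHEMA_VERSION = "v1"             # bump when the chunk shape changes


@dataclass(frozen=True)
class Document:
    doc_id: str
    tenant: str
    source: str            # where the record lives, e.g. a wiki path or ticket ID
    updated_at: datetime
    classification: str
    text: str


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str       # where did this come from?
    scope: str        # which tenant scope applied?
    updated_at: str   # how fresh is it? (ISO-8601)


@dataclass(frozen=True)
class ResourceResult:
    version: str
    chunks: list[Chunk]
    excluded: int     # what was excluded? (matches withheld by policy)
    truncated: bool   # True if more visible matches existed than the limit allowed


def search_docs(store: list[Document], tenant: str, query: str, limit: int = 10) -> ResourceResult:
    limit = max(1, min(limit, MAX_LIMIT))  # clamp the caller's limit
    q = query.lower()
    # Other tenants' documents are dropped silently; counting them would leak
    # that they exist.
    matches = [d for d in store if d.tenant == tenant and q in d.text.lower()]
    visible = [d for d in matches if d.classification in ALLOWED]
    visible.sort(key=lambda d: d.updated_at, reverse=True)  # freshest first
    chunks = [
        Chunk(d.text, d.source, f"tenant:{tenant}", d.updated_at.isoformat())
        for d in visible[:limit]
    ]
    return ResourceResult(SCHEMA_VERSION, chunks, excluded=len(matches) - len(visible),
                          truncated=len(visible) > limit)


utc = timezone.utc
store = [
    Document("d1", "acme", "wiki/deploy-guide", datetime(2024, 5, 1, tzinfo=utc), "internal", "How we deploy the API"),
    Document("d2", "acme", "vault/deploy-keys", datetime(2024, 5, 2, tzinfo=utc), "secret", "Deploy keys for prod"),
    Document("d3", "globex", "wiki/deploy", datetime(2024, 5, 3, tzinfo=utc), "public", "Globex deploy notes"),
]
result = search_docs(store, "acme", "deploy")
print(result.excluded, [c.source for c in result.chunks])  # 1 ['wiki/deploy-guide']
```

The `excluded` count covers only the caller's own tenant: the secret document is acknowledged as withheld, while the other tenant's match is never mentioned at all.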

Tools need explicit consent and explicit failure

The spec is unusually direct here: tools represent arbitrary code execution, and hosts must obtain explicit user consent before invoking them.[1] Treat that sentence as a design constraint, not as legal boilerplate.

Operationally, that means:

- separate read and write tools

- make side effects require a confirmation step

- clamp inputs server-side

- return structured errors that distinguish `not found`, `not allowed`, and `temporarily unavailable`

If a tool can mutate state, the server should add enough friction that calling it is a deliberate act. If the agent can trigger a destructive action from a vague natural-language request, the tool contract is too broad.
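The sketch below applies those rules to a hypothetical ticket system: a read-only `get_ticket`, and a separate `close_tickets` write tool that previews on the first call and acts only when an HMAC confirmation token comes back. Every name here is an assumption, and the result dictionaries are only shaped after the MCP tool result (`content` plus `isError`). The token binds a confirmation to one user and one set of tickets, but it proves consent only if the host shows the preview to a human before sending the token back.

```python
import hashlib
import hmac
import json
from enum import Enum
from typing import Optional

SERVER_SECRET = b"replace-with-a-real-secret"  # hypothetical; load from a secret store
MAX_BATCH = 5  # clamp: one call can never close more than this many tickets


class ErrorCode(str, Enum):
    NOT_FOUND = "not_found"
    NOT_ALLOWED = "not_allowed"
    UNAVAILABLE = "temporarily_unavailable"


def tool_result(payload: dict, is_error: bool = False) -> dict:
    # Shaped like an MCP tool result: a list of content items plus an isError flag.
    return {"content": [{"type": "text", "text": json.dumps(payload)}], "isError": is_error}


def fail(code: ErrorCode, message: str) -> dict:
    return tool_result({"error": code.value, "message": message}, is_error=True)


def confirmation_token(user: str, ticket_ids: list[str]) -> str:
    # HMAC binds the token to this user and this exact set of tickets.
    msg = f"{user}:{','.join(ticket_ids)}".encode()
    return hmac.new(SERVER_SECRET, msg, hashlib.sha256).hexdigest()


def get_ticket(store: dict, user: str, ticket_id: str) -> dict:
    """Read-only tool: never changes state, so it needs no confirmation."""
    ticket = store.get(ticket_id)
    if ticket is None:
        return fail(ErrorCode.NOT_FOUND, f"no ticket {ticket_id}")
    if ticket["owner"] != user:
        return fail(ErrorCode.NOT_ALLOWED, f"{user} cannot read {ticket_id}")
    return tool_result({"id": ticket_id, **ticket})


def close_tickets(store: dict, user: str, ticket_ids: list[str],
                  confirm: Optional[str] = None, backend_up: bool = True) -> dict:
    """Write tool: the first call only previews; the second must echo the token."""
    if not backend_up:
        return fail(ErrorCode.UNAVAILABLE, "ticket backend unreachable; retry later")
    ids = sorted(set(ticket_ids))
    if not 1 <= len(ids) <= MAX_BATCH:
        return fail(ErrorCode.NOT_ALLOWED, f"close between 1 and {MAX_BATCH} tickets per call")
    for tid in ids:
        ticket = store.get(tid)
        if ticket is None:
            return fail(ErrorCode.NOT_FOUND, f"no ticket {tid}")
        if ticket["owner"] != user:
            return fail(ErrorCode.NOT_ALLOWED, f"{user} cannot close {tid}")
    expected = confirmation_token(user, ids)
    if confirm is None:
        # Step 1: describe the side effect and change nothing. The host is expected
        # to show this preview to the user and send the token back only on approval.
        return tool_result({"preview": f"close {len(ids)} ticket(s)", "ticket_ids": ids,
                            "confirm": expected})
    if not hmac.compare_digest(confirm.encode(), expected.encode()):
        return fail(ErrorCode.NOT_ALLOWED, "confirmation does not match this request")
    for tid in ids:
        store[tid]["status"] = "closed"  # Step 2: the only line with a side effect
    return tool_result({"closed": ids})


store = {"T1": {"owner": "ana", "status": "open"}, "T2": {"owner": "ben", "status": "open"}}
preview = close_tickets(store, "ana", ["T1"])
token = json.loads(preview["content"][0]["text"])["confirm"]
done = close_tickets(store, "ana", ["T1"], confirm=token)
print(store["T1"]["status"])  # closed
```

Keeping the error codes to a small fixed set lets the agent, and the humans reading logs later, tell a permission problem from an outage without parsing free-form messages.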

Logging is part of the contract

The spec also includes logging, error reporting, and cancellation as first-class protocol features.[1] That matters because MCP systems fail in normal ways:

- the search index lags

- auth expires

- a downstream API times out

- a resource turns out to be inaccessible

When that happens, the server should log enough to answer who asked, what scope applied, what was returned, and why the request failed. Without that, you cannot tell whether the issue was permissions, freshness, or model behavior.
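A minimal sketch of an audit line that answers those questions, using Python's standard `logging` module. The logger name, field names, and `log_call` helper are hypothetical; the point is one structured record per call, carrying who asked, the scope applied, the source IDs returned, and an error code that separates permission failures from availability failures. This is a server-side audit trail, separate from the log notifications an MCP server sends to clients.

```python
import json
import logging
import time
import uuid
from typing import Optional

audit_log = logging.getLogger("mcp.audit")  # hypothetical logger name


def log_call(user: str, tool: str, scope: str, returned: list[str], outcome: str,
             error_code: Optional[str] = None, started: Optional[float] = None) -> dict:
    """Write one JSON line per tool or resource call and return the record."""
    record = {
        "request_id": str(uuid.uuid4()),
        "user": user,                  # who asked
        "tool": tool,
        "scope": scope,                # what scope the server applied
        "returned": sorted(returned),  # what was returned, by source ID
        "outcome": outcome,            # "ok" or "error"
        "error_code": error_code,      # why it failed, e.g. "not_allowed"
    }
    if started is not None:
        record["duration_ms"] = round((time.monotonic() - started) * 1000, 1)
    level = logging.INFO if outcome == "ok" else logging.WARNING
    audit_log.log(level, json.dumps(record, sort_keys=True))
    return record


logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
t0 = time.monotonic()
ok = log_call("ana", "search_docs", "tenant:acme", ["wiki/deploy-guide"], "ok", started=t0)
failed = log_call("ana", "close_tickets", "tenant:acme", [], "error",
                  error_code="temporarily_unavailable")
```

Because every line is JSON with stable keys, answering "was this a permissions problem or an outage?" becomes a query over `error_code` rather than a transcript review.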

What I would ship first

The checklist I use is simple:

- Can I explain the server in one sentence?

- Does it sit inside one trust domain?

- Are permissions enforced on the server, not in the prompt?

- Can it return provenance for every useful answer?

- Does every write tool require explicit consent or confirmation?

- Can I audit a failure without reading a transcript by hand?

If the answer to any of those is no, the server is not ready for production.

Why narrow beats clever

MCP pays off only when you resist the urge to centralize everything. The more useful the protocol becomes, the more tempting it is to turn it into a junk drawer for all context and all tools. The spec's consent requirements and its separation of resources, prompts, and tools both push against exactly that failure mode.

Small servers are easier to reason about, easier to test, and easier to delete when they prove unnecessary. That is the standard worth optimizing for.