When to Fine-Tune vs Retrieve vs Prompt

The bad decision pattern is consistent: a team has a model that is sort of right, sort of unreliable, and then reaches for the most expensive lever first.

That usually means one of three moves:

- add more prompt text

- add retrieval

- fine-tune the model

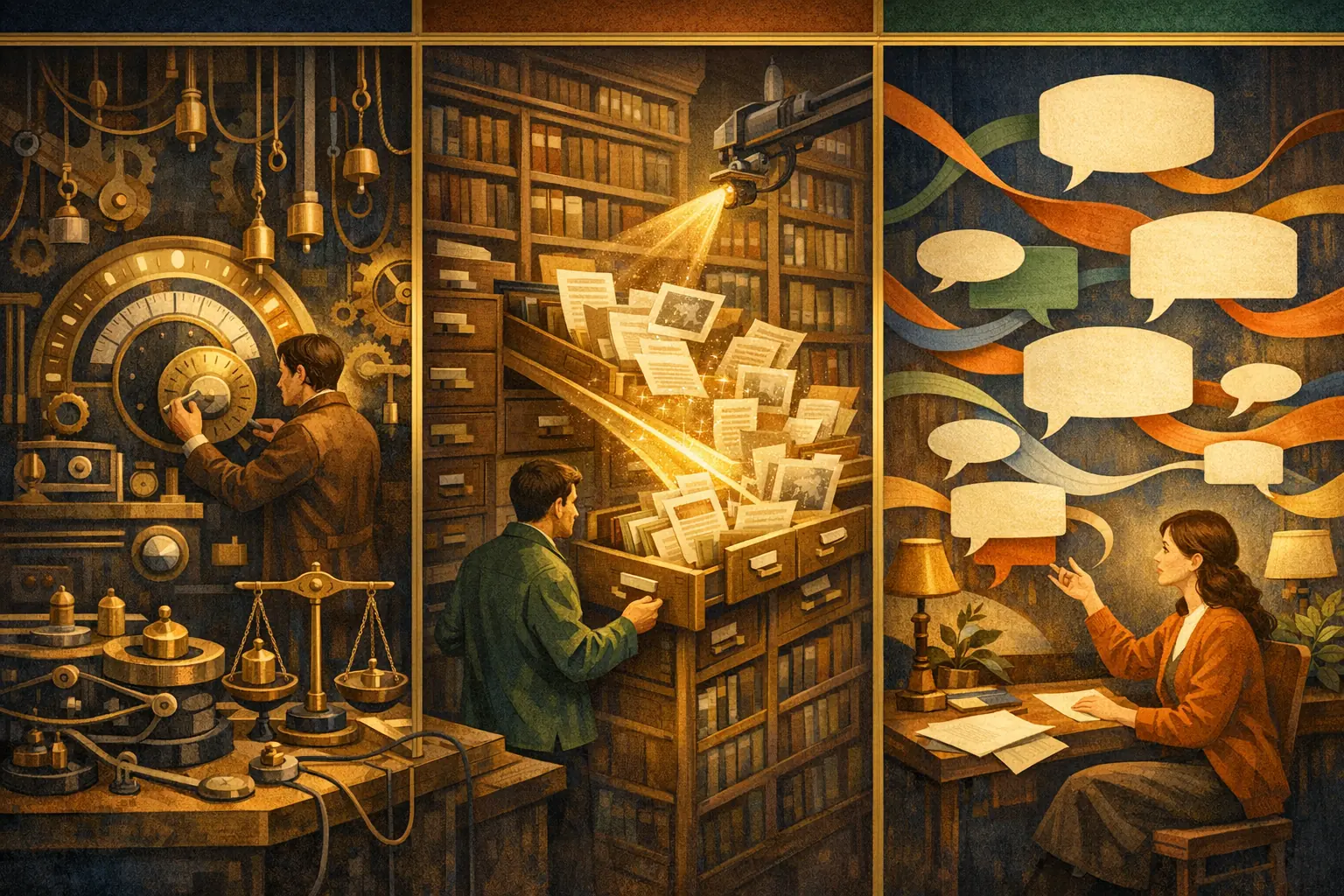

These are not interchangeable. OpenAI’s docs separate them pretty cleanly: prompt engineering is about giving the model clear instructions, goals, and context; retrieval is about semantic search over your data; and fine-tuning is about training a model to excel at a task with examples. (See: Prompt engineering, Retrieval, Model optimization.)

The actual decision

I reduce the choice to one question: what is missing?

| Missing piece | The failure looks like | Best first move |
|---|---|---|
| Better instructions | The model knows the fact, but the answer is sloppy, verbose, or misformatted | Prompting |
| Better evidence | The answer depends on current, private, or tenant-scoped information | Retrieval |
| Better behavior | The model keeps making the same output or reasoning mistake across many examples | Fine-tuning |
| Better authorization | The model can see data it should not see | App-layer access control, not prompting |

If you do not diagnose the failure mode first, you will spend money on the wrong fix and then call the result “product iteration.”
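The table can be read as a small lookup. The sketch below is only an illustration: the `Failure` labels, the `first_moves` function, and the ordering (authorization first, then cheapest lever first) are assumptions made for this example, not an established taxonomy.

```python
from enum import Enum


class Failure(Enum):
    """Hypothetical labels for the four 'missing piece' rows in the table."""
    INSTRUCTIONS = "instructions"
    EVIDENCE = "evidence"
    BEHAVIOR = "behavior"
    AUTHORIZATION = "authorization"


FIRST_MOVE = {
    Failure.INSTRUCTIONS: "prompting",
    Failure.EVIDENCE: "retrieval",
    Failure.BEHAVIOR: "fine-tuning",
    Failure.AUTHORIZATION: "app-layer access control",
}

# Assumed priority: fix access problems first, then try the cheapest lever.
PRIORITY = [
    Failure.AUTHORIZATION,
    Failure.INSTRUCTIONS,
    Failure.EVIDENCE,
    Failure.BEHAVIOR,
]


def first_moves(diagnosed: set[Failure]) -> list[str]:
    """Map diagnosed failure modes to suggested fixes, in priority order."""
    return [FIRST_MOVE[f] for f in PRIORITY if f in diagnosed]
```

For example, `first_moves({Failure.BEHAVIOR, Failure.EVIDENCE})` suggests retrieval before fine-tuning, which matches the idea that missing evidence should be ruled out before training on examples.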

Prompting is for steering, not storing

Prompting works when the model already has the capability and only needs sharper direction.

That includes:

- output shape and formatting

- tone and refusal style

- tool selection hints

- asking for clarification when inputs are incomplete

- small workflow changes that do not require durable knowledge

OpenAI’s prompt engineering guide is explicit that the prompt should provide clear goals and, where needed, extra context and examples. (See: OpenAI Prompting.)
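A minimal sketch of a prompt that steers rather than stores: a goal, an output contract, and one worked example. The `build_messages` helper, the `needs_clarification` field, and the role/content message shape are assumptions for illustration; adapt them to whatever chat API you actually call.

```python
import json


def build_messages(goal: str, output_schema: dict,
                   example: tuple[str, dict], user_input: str) -> list[dict]:
    """Assemble a chat-style message list: instructions, one example, then the input."""
    system = (
        f"Goal: {goal}\n"
        "Reply with a single JSON object matching this schema and nothing else:\n"
        f"{json.dumps(output_schema, indent=2)}\n"
        'If a required input is missing, set "needs_clarification" to true.'
    )
    example_input, example_output = example
    return [
        {"role": "system", "content": system},
        # One worked example shows the output shape instead of describing it.
        {"role": "user", "content": example_input},
        {"role": "assistant", "content": json.dumps(example_output)},
        {"role": "user", "content": user_input},
    ]


messages = build_messages(
    goal="Classify the support ticket by urgency.",
    output_schema={"urgency": "low | medium | high", "needs_clarification": "boolean"},
    example=("Site is down for all users", {"urgency": "high", "needs_clarification": False}),
    user_input="Can you change my invoice address?",
)
```

Nothing in this prompt is durable knowledge. If product docs or policy text start showing up in the `system` string, that is a sign the content belongs in retrieval instead.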

Prompting starts to fail when the prompt becomes a warehouse:

- product docs pasted inline

- policy text duplicated across routes

- long examples that mutate every deploy

- hidden behavior rules that only live in prose

At that point, you are not engineering a prompt. You are growing a fragile config blob.

Retrieval is for evidence

Retrieval is the right move when the model needs facts that live outside the base model or change faster than the model can be retrained.

OpenAI’s Retrieval API performs semantic search over your data and supports hybrid search that balances semantic and keyword matching. Hybrid search is a better fit than purely semantic search for corpora where exact terms, IDs, policy clauses, or ticket numbers matter. (See: OpenAI Retrieval.)

I reach for retrieval when the task depends on:

- internal documentation

- current product or policy state

- customer-specific records

- regulated material with explicit access rules

Retrieval is also the right choice when you need to answer “where did this come from?” with something stronger than vibes. If the answer can be traced to documents, the system is easier to audit and debug.
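Here is a toy sketch of those two properties together: scoping by tenant before ranking, and carrying document IDs through so an answer can be traced. The `Doc` type, `keyword_score`, and `retrieve` are hypothetical stand-ins written for this example; a real system would use a proper search backend rather than word overlap.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    tenant_id: str
    text: str


def keyword_score(query: str, text: str) -> int:
    """Toy relevance score: number of distinct query words found in the text."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def retrieve(query: str, tenant_id: str, corpus: list[Doc], k: int = 3) -> list[Doc]:
    """Return up to k matching docs, restricted to one tenant."""
    # Filter by tenant BEFORE ranking, so out-of-scope text never reaches the model.
    allowed = [d for d in corpus if d.tenant_id == tenant_id]
    ranked = sorted(allowed, key=lambda d: keyword_score(query, d.text), reverse=True)
    return [d for d in ranked[:k] if keyword_score(query, d.text) > 0]


def format_evidence(docs: list[Doc]) -> str:
    """Prefix each passage with its ID so the answer can cite its source."""
    return "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)


corpus = [
    Doc("a-1", "acme", "refund window is 30 days"),
    Doc("b-1", "globex", "refund window is 14 days"),
    Doc("a-2", "acme", "shipping takes 5 days"),
]
evidence = format_evidence(retrieve("refund window", "acme", corpus))
```

The tenant filter runs in application code, not in the prompt, so a badly worded instruction cannot leak another tenant's records.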

Retrieval does not remove context design work. Long-context models make positional failures more visible, not less. The “Found in the Middle” paper reports a U-shaped attention bias in which tokens at the start and end of the input get more attention, regardless of relevance, and it reports RAG improvements of up to 15 percentage points after calibrating for that bias. (See: Found in the Middle.)

That means the job is not just “retrieve more.” It is “retrieve the right things, rank them correctly, and place them where the model is likely to use them.”
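One cheap way to act on placement is to reorder ranked passages so the strongest ones sit at the edges of the context. Note that this is a reordering heuristic, not the attention calibration method the paper itself proposes, and whether it helps depends on the model. The `place_at_edges` function is a hypothetical helper for illustration.

```python
def place_at_edges(ranked: list[str]) -> list[str]:
    """Reorder passages (given best-first) so the best land at the start and end.

    Even-ranked items fill the front, odd-ranked items fill the back in
    reverse, which pushes the weakest passages toward the middle.
    """
    front: list[str] = []
    back: list[str] = []
    for i, passage in enumerate(ranked):
        (front if i % 2 == 0 else back).append(passage)
    return front + back[::-1]


# "A" is the most relevant passage, "E" the least.
ordered = place_at_edges(["A", "B", "C", "D", "E"])
# ordered == ["A", "C", "E", "D", "B"]
```

The ranking still has to be right first. Reordering a badly ranked list only moves the wrong passages to the most visible positions.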

Fine-tuning is for repeated behavior

Fine-tuning pays off when the thing you are trying to fix is stable and repeated enough that examples beat instructions.

OpenAI’s fine-tuning guide is clear about the payoff: you can make a model consistently format responses, handle novel inputs in a task-specific way, use shorter prompts, and train on proprietary data without repeating it in every request. (See: OpenAI Model optimization.)

Good fine-tuning candidates are usually narrow:

- classification

- extraction into a fixed schema

- domain-specific rewriting

- strict house style

What fine-tuning does not solve:

- freshness

- per-user or per-tenant permissions

- changing policy text

- knowledge that should be visible only in a scoped session

If the problem is “the model keeps being wrong because it does not see the right facts,” fine-tuning is the wrong tool. If the problem is “the model sees the facts but keeps behaving inconsistently,” fine-tuning starts to make sense.
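For the behavior case, the training data is just many examples of input paired with the output you want. The sketch below builds chat-format JSONL, one JSON object with a `messages` list per line, for a fixed-schema extraction task. The `SYSTEM` text, field names, and helper functions are assumptions for illustration; check the current fine-tuning guide for the exact file format your model expects.

```python
import json

# Hypothetical task instruction, kept short and identical across examples.
SYSTEM = "Extract the order fields and reply with JSON only."


def to_training_line(raw_text: str, fields: dict) -> str:
    """Serialize one (input, desired output) pair as a single JSONL line."""
    record = {
        "messages": [
            {"role": "system", "content": SYSTEM},
            {"role": "user", "content": raw_text},
            # The assistant turn is the exact output the model should learn to produce.
            {"role": "assistant", "content": json.dumps(fields, sort_keys=True)},
        ]
    }
    return json.dumps(record)


def to_jsonl(examples: list[tuple[str, dict]]) -> str:
    """Join all examples into JSONL text, one record per line."""
    return "\n".join(to_training_line(text, fields) for text, fields in examples)


jsonl = to_jsonl([
    ("Please send 3 blue mugs to Oslo", {"item": "blue mug", "quantity": 3, "city": "Oslo"}),
    ("Need 1 desk lamp shipped to Lyon", {"item": "desk lamp", "quantity": 1, "city": "Lyon"}),
])
```

Notice what is absent: no facts that change, no tenant data, no policy text. The examples teach an output shape, which is the part fine-tuning is good at.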

The sequence I actually trust

The practical order is usually:

- first, tighten the prompt and output contract

- then, add retrieval if the answer depends on external evidence

- last, fine-tune only when the behavior is stable, measurable, and repeated

OpenAI’s Assistants migration guide makes the same separation of concerns explicit: application code handles orchestration, like history pruning, tool loops, and retries, while the prompt stays focused on high-level behavior and constraints. (See: OpenAI Assistants migration.)

That split is the real reason the order matters. It keeps you from encoding infrastructure concerns into prose.
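As a rough sketch of that split, retries and output validation can live in plain application code while the prompt stays unchanged. Here `call_model` is a hypothetical callable standing in for whatever client you use, and the validation rule (a JSON object with required keys) is an assumption for the example.

```python
import json
from typing import Callable


def call_with_retries(call_model: Callable[[str], str], prompt: str,
                      required_keys: set[str], max_attempts: int = 3) -> dict:
    """Call the model until its output parses as JSON and has the required keys."""
    last_error = "no attempts made"
    for _ in range(max_attempts):
        raw = call_model(prompt)
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError as exc:
            last_error = f"invalid JSON: {exc}"
            continue
        if not isinstance(parsed, dict):
            last_error = "output is not a JSON object"
            continue
        missing = required_keys - set(parsed)
        if not missing:
            return parsed
        last_error = f"missing keys: {sorted(missing)}"
    raise ValueError(f"output failed validation after {max_attempts} attempts: {last_error}")


# Usage with a fake model that fails once, then returns valid output.
replies = iter(["not json", '{"label": "spam"}'])
result = call_with_retries(lambda prompt: next(replies), "Classify this email.", {"label"})
```

The retry policy is testable without a model and can change without touching the prompt, which is the point of keeping it out of prose.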

What I would not do

I would not use fine-tuning to encode:

- facts that go stale

- tenant-scoped policy

- access control logic

- anything that changes weekly

I would not use retrieval as a substitute for clear instructions, because retrieved evidence does not tell the model what to do with it.

And I would not keep enlarging the prompt until it becomes a junk drawer. That usually means the app still does not know what it is actually responsible for.

The rule of thumb

If one good example fixes it, start with prompting.

If the answer lives in documents, records, or fresh data, use retrieval.

If the same output defect keeps showing up across many examples and routes, fine-tune.

If the issue is who can see the data, solve that in the application and retrieval layers before the model ever sees it.

That is the small set of decisions that keeps the system simpler rather than just more expensive.